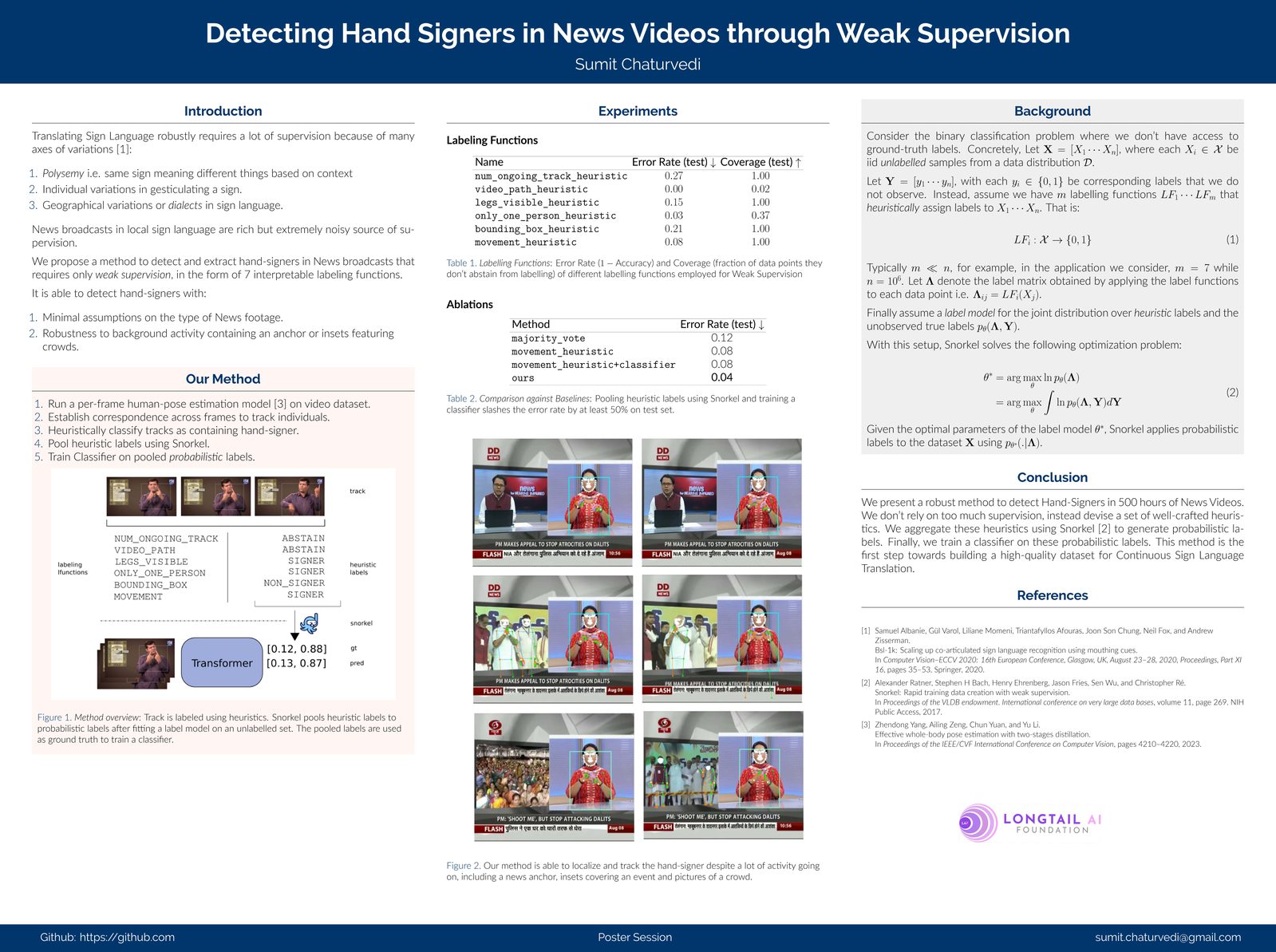

Signer detection through weak supervision

A practical bottleneck in collecting Indian Sign Language data at scale is finding the signer in the frame. News broadcasts and educational videos often inset a small signer in a corner of the screen, but the position, size, and styling vary across producers. Hand-labelling every minute of footage to extract these regions does not scale.

We are experimenting with weak supervision to bootstrap a signer detector — combining cheap heuristics (motion, skin-tone proxies, location priors) with a small amount of clean labelled data, then iteratively refining the labels with the model’s own predictions. This lets us turn many hours of unlabelled video into usable training material with very little human review.

The poster below summarises our approach and early results.

If you are working on similar problems in low-resource sign-language video, we would love to hear from you — info@longtailai.org.